Serverless and FaaS (Function as a Service) are no longer new buzzwords. The SaaS industry is quickly adopting this cloud computing paradigm because of the gains it brings in cost, scalability, and delivery speed. The serverless market is expected to reach $7.7B by 2021.

This post covers:

- A brief introduction to serverless.

- Some stats on the serverless technology landscape.

- Why we chose Knative, and an introduction to it.

- Why hybrid apps are the way to go.

What is serverless?

To understand serverless, it helps to look at how cloud computing has evolved so far.

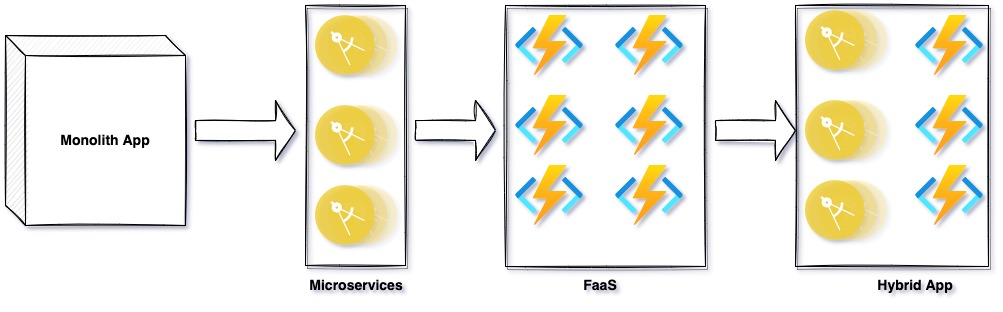

First came monolithic applications, built as a single unified code unit and deployed on a traditional server or a virtual machine. They are complex to manage and scale.

Then came microservices, where an application is divided into smaller, independently deployable services. Containerisation helped in managing and scaling microservices to a certain extent, but developers still have to take care of infrastructure and deployment configuration.

Serverless is the next cloud computing architectural model. It allows developers to focus only on writing application logic without worrying about the underlying infrastructure.

Benefits of serverless

- Lower TCO - Serverless computing scales compute resources up and down on demand, so it is cost-effective compared to traditional server allocation, which often means paying for unused storage and compute resources.

- Simplified scalability - Developers using serverless architecture don’t have to worry about policies to scale up their code. The serverless platform handles all of the scaling on demand.

- Simplified backend code - With FaaS, developers can create simple functions that each serve a single purpose, such as making an API call.

- Faster GTM - Serverless architecture can significantly cut time to market. Instead of needing a complicated deployment process to roll out bug fixes and new features, developers can add and modify code on a piecemeal basis.
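To make the "single purpose" idea concrete, here is a minimal sketch of what such a function could look like. The `handler` name, the event shape, the response shape, and the `api.example.com` URL are all assumptions made for illustration and are not tied to any particular FaaS platform.

```python
import json
from typing import Callable
from urllib.request import urlopen


def fetch_json(url: str) -> dict:
    """Default fetcher: one HTTP GET that returns the decoded JSON body."""
    with urlopen(url, timeout=5) as response:
        return json.load(response)


def handler(event: dict, fetch: Callable[[str], dict] = fetch_json) -> dict:
    """Hypothetical FaaS entry point: look up one user and return their name."""
    user_id = event["user_id"]
    # Placeholder URL, not a real service.
    user = fetch(f"https://api.example.com/users/{user_id}")
    return {"statusCode": 200, "body": json.dumps({"name": user["name"]})}
```

The function does one thing and holds no state, which is what lets a platform run as many or as few copies of it as the traffic needs. Passing `fetch` as a parameter is only a convenience so the function can be exercised without a network call.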

Current serverless landscape

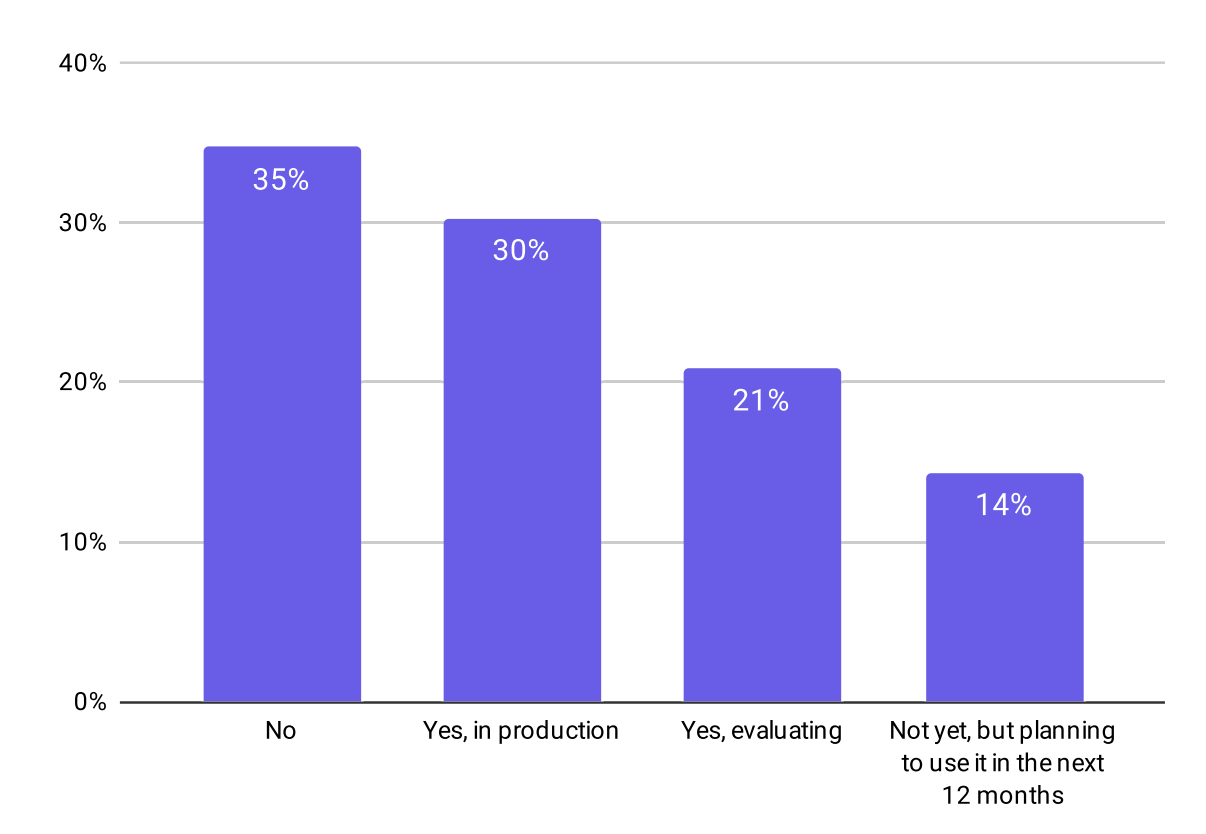

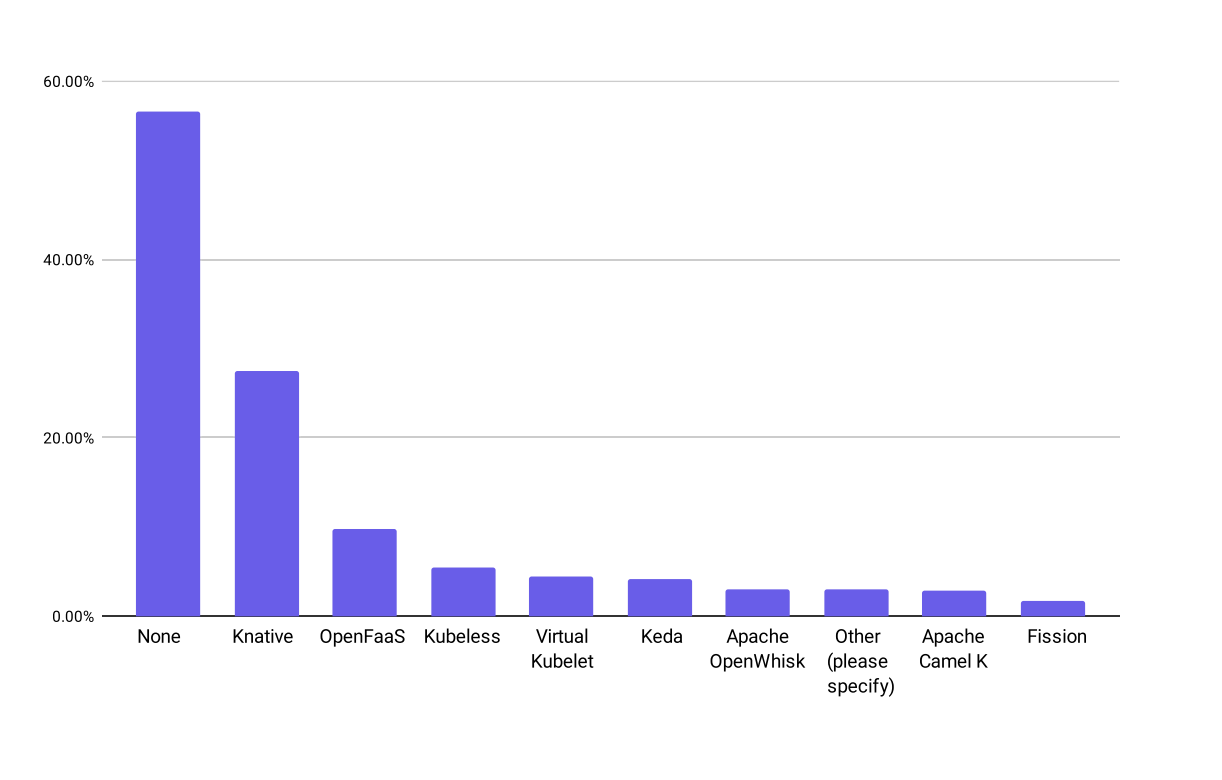

According to the 2020 CNCF (Cloud Native Computing Foundation) survey, around 30% of respondents already use serverless technology in production, and another 35% are evaluating it or plan to evaluate it in the next 12 months.

Serverless technology can be consumed in two ways:

Hosted platform

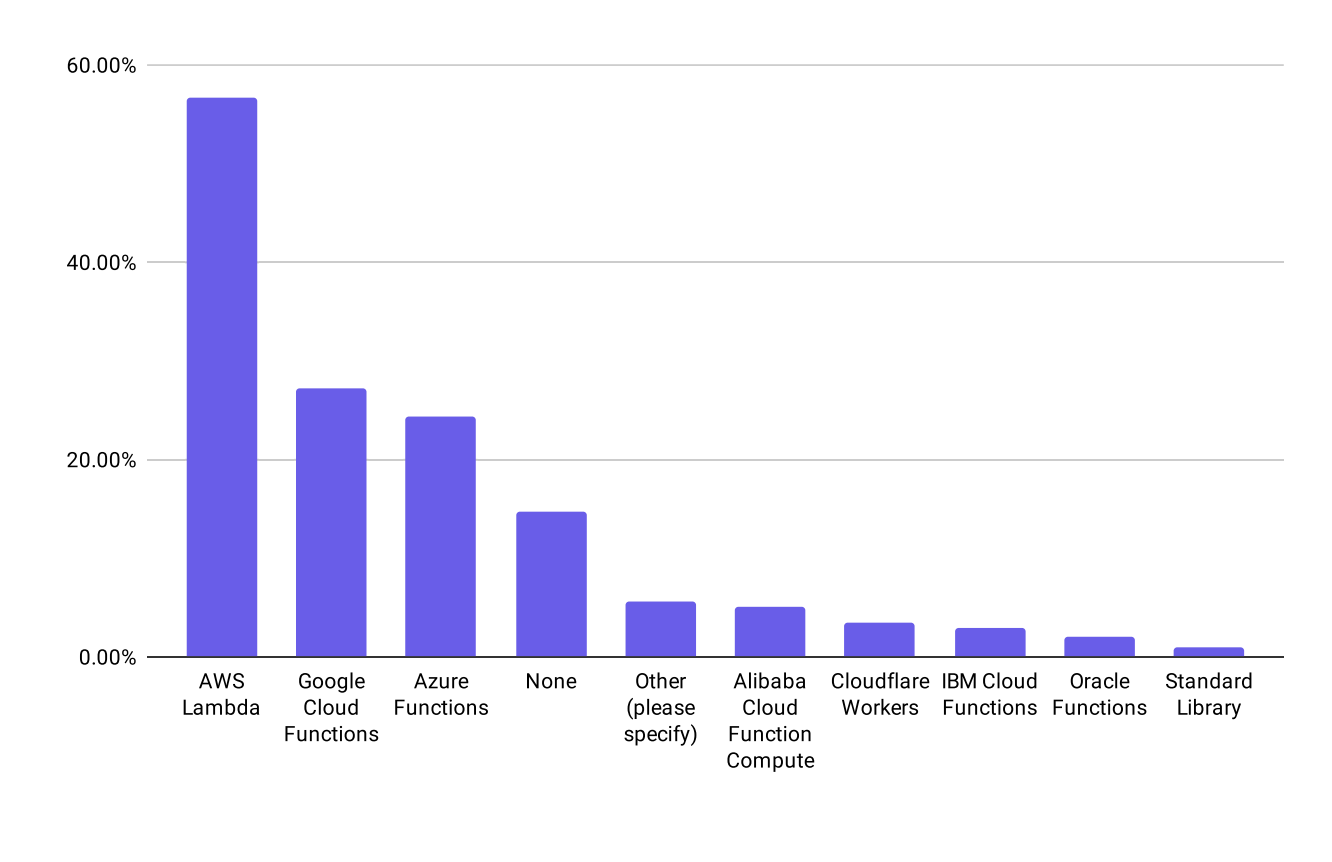

Cloud vendors provide these serverless platforms, which developers can use directly. They are mostly billed on a per-function-call basis. Well-known hosted serverless platforms include AWS Lambda, Google Cloud Functions, and Azure Functions. They are quick and easy to use, but they lead to vendor lock-in and are not interoperable across cloud vendors.

Installable software

This is a cloud vendor-neutral approach in which additional software is installed on the infrastructure to provide serverless features. Serverless applications built this way do not suffer from vendor lock-in and can be migrated to another cloud vendor or to an on-premise platform.

In this category, Knative is the top choice, picked by 27% of users.

Knative

We were looking for a cloud-agnostic serverless solution that avoids vendor lock-in and fits our existing infrastructure stack (Kubernetes, Istio, Kafka, etc.). Knative turned out to be the best fit for our needs.

Introduction

Knative is an open-source project which provides a serverless experience layer on Kubernetes. Knative was initially developed by Google in collaboration with IBM, Pivotal, Red Hat, SAP, and nearly 50 other companies.

Knative adds all the components required for deploying, running, and managing a serverless application on Kubernetes. The developer only needs to package a function or service as a container image and let Knative manage it in a serverless fashion.

Knative's serverless framework has two core components: Serving and Eventing.

Serving Component

Knative Serving defines a set of Objects as Custom Resource Definitions (CRDs). These objects define and control how your serverless workload behaves on the cluster:

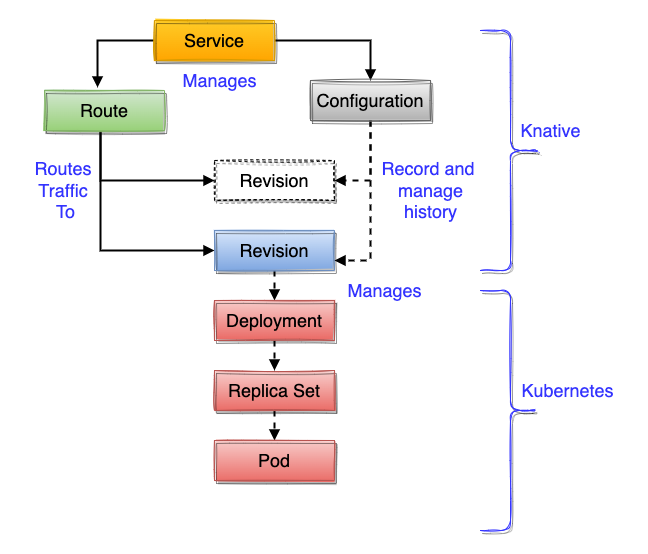

The primary Knative Serving resources are Services, Routes, Configurations, and Revisions:

Route: A Route provides a named endpoint and a mechanism for routing traffic to Revisions.

Revision: A Revision is an immutable snapshot of code and configuration, created by a Configuration.

Configuration: A Configuration acts as a stream of environments for Revisions.

Service: A Service is a top-level container for managing a Route and a Configuration, which together implement a network service.
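As a rough illustration of how these resources fit together, the sketch below builds a Knative Service manifest as a plain Python dict and prints it as JSON. The `serving.knative.dev/v1` API version and the `template` and `traffic` fields come from the Knative Serving API; the `knative_service` helper, the `hello` service name, the image URL, and the revision names are hypothetical.

```python
import json


def knative_service(name: str, image: str, revision_suffix: str, traffic: list) -> dict:
    """Build a Knative Service manifest as a plain dict (illustrative helper)."""
    return {
        "apiVersion": "serving.knative.dev/v1",
        "kind": "Service",
        "metadata": {"name": name},
        "spec": {
            # The template plays the role of the Configuration: every
            # change to it produces a new immutable Revision.
            "template": {
                "metadata": {"name": f"{name}-{revision_suffix}"},
                "spec": {"containers": [{"image": image}]},
            },
            # The traffic block plays the role of the Route: it maps
            # percentages of incoming requests to Revisions.
            "traffic": traffic,
        },
    }


manifest = knative_service(
    name="hello",
    image="registry.example.com/hello:v2",  # placeholder image
    revision_suffix="v2",
    traffic=[
        {"revisionName": "hello-v1", "percent": 90},
        {"revisionName": "hello-v2", "percent": 10},
    ],
)
print(json.dumps(manifest, indent=2))
```

On a cluster with Knative Serving installed, the printed JSON could be applied with `kubectl apply -f -`, assuming a `hello-v1` Revision already exists from an earlier deployment.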

Knative Serving focuses on the following use cases:

- Rapid deployment of serverless containers.

- Automatic scale-up and scale-down to zero based on traffic.

- Support for multiple networking layers such as Ambassador, Contour, Kourier, Gloo, and Istio for integration into existing environments.

- Point-in-time snapshots of deployed code and configurations.

- Traffic splitting across versions for use cases such as A/B testing or phased rollouts.
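Two of these ideas, scale to zero and traffic splitting, can be sketched in a few lines. This is a deliberately simplified model and not Knative's actual autoscaler or router: `desired_replicas` assumes replicas are sized by dividing in-flight requests by a per-pod concurrency target, and `pick_revision` assumes a plain weighted random choice. All names and numbers here are illustrative.

```python
import math
import random


def desired_replicas(in_flight: int, target_per_pod: int, max_replicas: int = 100) -> int:
    """Simplified sizing rule: no traffic means zero pods."""
    if in_flight <= 0:
        return 0
    return min(max_replicas, math.ceil(in_flight / target_per_pod))


def pick_revision(traffic: dict, rng: random.Random) -> str:
    """Choose a revision for one request, weighted by its traffic percentage."""
    names = list(traffic)
    weights = [traffic[name] for name in names]
    return rng.choices(names, weights=weights, k=1)[0]


# A phased rollout that sends roughly 10% of requests to the new revision.
split = {"hello-v1": 90, "hello-v2": 10}
rng = random.Random(42)
sample = [pick_revision(split, rng) for _ in range(10_000)]
print("replicas for 250 in-flight requests:", desired_replicas(250, 100))
print("share routed to hello-v2:", sample.count("hello-v2") / len(sample))
```

The real autoscaler also smooths its measurements over time windows, so treat the formula as intuition for why idle services cost nothing rather than as a description of the implementation.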

Eventing Component

Knative Eventing is the component that helps you build serverless applications on an event-driven architecture.

Events in Knative Eventing follow the CloudEvents specification, which enables creating, parsing, sending, and receiving events in any programming language using a standard HTTP POST.
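Here is a minimal sketch of what "an event over HTTP POST" means in practice, using only the Python standard library. The `ce-` headers are the required CloudEvents attributes (`specversion`, `id`, `source`, `type`) in binary content mode; the `cloudevent_request` helper, the broker URL, and the event type are assumptions for illustration.

```python
import json
import uuid
from urllib.request import Request


def cloudevent_request(broker_url: str, event_type: str, source: str, data: dict) -> Request:
    """Build (but do not send) an HTTP POST that carries one CloudEvent."""
    headers = {
        # Required CloudEvents context attributes, one header each.
        "ce-specversion": "1.0",
        "ce-id": str(uuid.uuid4()),
        "ce-source": source,
        "ce-type": event_type,
        # The payload itself travels as the ordinary HTTP body.
        "Content-Type": "application/json",
    }
    body = json.dumps(data).encode("utf-8")
    return Request(broker_url, data=body, headers=headers, method="POST")


request = cloudevent_request(
    broker_url="http://broker.example.com/default",  # placeholder URL
    event_type="com.example.order.created",
    source="/orders",
    data={"order_id": 1},
)
print(request.get_method(), request.full_url)
```

Passing the request to `urllib.request.urlopen` would actually send it. Because the event is just headers plus a body, any language with an HTTP client can produce or consume it.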

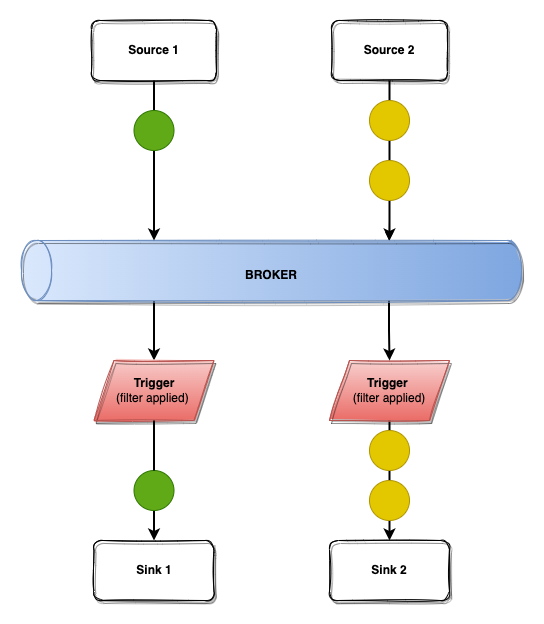

The primary Knative Eventing resources are Sources, Brokers, Triggers, and Sinks.

Source: A Source is an application that generates events based on the CloudEvents specification.

Broker: A Broker acts as an event hub where Sources send their events. Brokers provide a discoverable endpoint for event ingress and use Triggers for event delivery. Broker implementations include in-memory, Kafka, and RabbitMQ.

Trigger: Triggers forward events from a Broker to Sinks. They allow events to be filtered by attributes, so that events with particular attributes are sent only to the Subscribers that have registered interest in events with those attributes.

Sink: Sinks are the consumers of events. A Sink can also respond to an event by generating a response event.
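The flow between these four pieces can be modelled in memory. The sketch below is a toy, not Knative code: `Broker`, `Trigger`, and the sink functions are hypothetical Python stand-ins, events are plain dicts, and filtering is an exact match on attributes.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Event = dict  # attributes such as "type" and "source", plus "data"
Sink = Callable[[Event], Optional[Event]]


@dataclass
class Trigger:
    filter_attributes: dict
    sink: Sink

    def matches(self, event: Event) -> bool:
        """Exact match on every attribute named in the filter."""
        return all(event.get(k) == v for k, v in self.filter_attributes.items())


@dataclass
class Broker:
    triggers: list = field(default_factory=list)

    def publish(self, event: Event) -> None:
        """Deliver an event to matching sinks; replies re-enter the broker."""
        # Note: a sink whose reply matches its own trigger would loop forever.
        pending = [event]
        while pending:
            current = pending.pop(0)
            for trigger in self.triggers:
                if trigger.matches(current):
                    reply = trigger.sink(current)
                    if reply is not None:
                        pending.append(reply)


received = []


def order_sink(event: Event) -> Optional[Event]:
    received.append(("orders", event["type"]))
    # Respond with a new event, which the broker routes like any other.
    return {"type": "com.example.order.confirmed", "source": "/order-sink", "data": event["data"]}


def audit_sink(event: Event) -> Optional[Event]:
    received.append(("audit", event["type"]))
    return None


broker = Broker()
broker.triggers.append(Trigger({"type": "com.example.order.created"}, order_sink))
broker.triggers.append(Trigger({"type": "com.example.order.confirmed"}, audit_sink))

# The code calling publish() plays the role of a Source.
broker.publish({"type": "com.example.order.created", "source": "/shop", "data": {"id": 1}})
broker.publish({"type": "com.example.user.signup", "source": "/shop", "data": {}})
print(received)
```

The signup event matches no Trigger and is dropped, while the order event reaches `order_sink`, whose reply is then picked up by `audit_sink`. Sources and Sinks never reference each other directly, which is the decoupling the Broker and Trigger model is meant to provide.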

Serverless or Microservice?

There is no single right answer, and real-world use cases might need the best of both worlds.

Microservices are well suited to heavy, long-running, latency-sensitive applications with highly predictable demand.

Serverless could be a good option for piecemeal tasks like DB access, or when you want to spin up applications quickly without managing infrastructure. It also makes more sense for lightweight, flexible applications that need to scale up and down quickly.

So real business applications might need both microservices and serverless apps, i.e. a hybrid application approach.

References

https://knative.dev/docs/getting-started/

https://www.cbinsights.com/research/serverless-cloud-computing/

https://www.cncf.io/wp-content/uploads/2020/12/CNCFSurveyReport_2020.pdf